A/B testing is one of the most powerful methods marketers have, yet many people get it wrong because they misunderstand how it should be applied. Shopify store owners and CRO specialists who want to make data-driven decisions often launch experiment after experiment without getting answers they can trust.

Changing button colors and swapping hero images will not, on their own, increase your revenue. Most companies would get better results from the testing they already do if they stopped making common A/B testing errors. Many teams put great effort into running tests, yet their results stay flat because they keep repeating mistakes that could have been avoided. Successful experiments start with a thorough CRO audit that reveals where the real friction is before any test goes live.

Experiments fail when teams choose the wrong testing methods instead of useful ones. This guide explains why companies rely on experiments to find growth opportunities, and what it costs when gut instinct is dressed up as scientific evidence. It covers the common A/B testing mistakes and why A/B tests fail, and shows you how to build a dependable testing process that produces reliable results for your business.

Why A/B Tests Fail More Often Than You Think

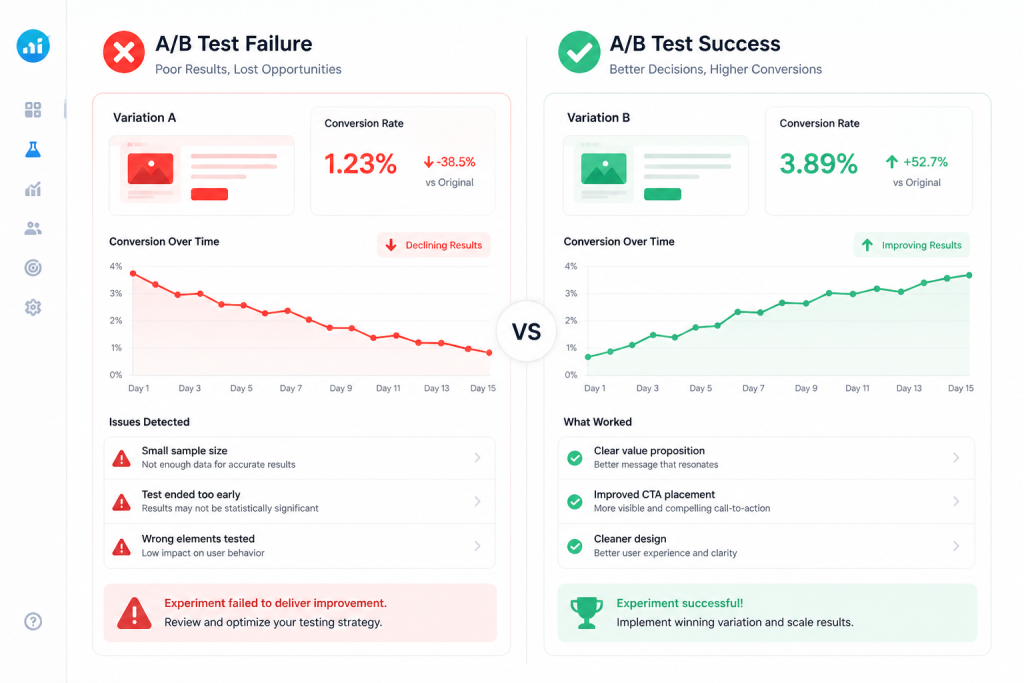

The reality of conversion rate optimization is humbling: many A/B tests show no statistically significant difference or, worse, produce a ‘false winner.’ When you find yourself asking why your A/B test is not working, the answer usually isn’t the creative; it’s the execution. Before choosing the best A/B testing tools for a Shopify store, understand that a tool is only as good as the person wielding it. Poor execution often stems from a lack of proper planning and a rush to get results.

Running an experiment without a structured hypothesis is like driving without a map; you might move, but you won’t know where you’re going. A/B testing errors also frequently occur during data interpretation. If you don’t account for external factors, such as a sudden holiday sale or a technical glitch on mobile devices, your data becomes “noisy” and unreliable. Many teams also suffer from technical mistakes such as “flicker” (where the original page shows for a split second before the variation loads), which hurts the user experience and biases the results. Understanding why A/B tests fail is the first step toward moving from “guessing” to “knowing.”

Testing Without a Clear Hypothesis

A hypothesis isn’t just a guess; it’s a proposed explanation based on limited evidence. Testing “to see what happens” is a waste of traffic. Without a measurable goal (e.g., “Increasing the font size of the price will reduce cart abandonment by 5%”), you cannot truly validate your findings.

Running Tests Without Enough Traffic

This is a mathematical wall many small stores hit. If you only have 100 visitors a week, achieving statistical significance is nearly impossible within a reasonable timeframe. Small sample sizes lead to massive fluctuations, making it look like you have a winner when you actually just have a random streak of luck.

The Most Common A/B Testing Mistakes to Avoid

To truly master growth, you must navigate around the most common A/B testing mistakes. If you follow a step-by-step A/B testing protocol, you can bypass the “rookie errors” that drain budgets and demotivate teams. Here are the core mistakes that plague even experienced marketers.

Mistake 1: Testing Too Many Variables at Once

This is known as the “spaghetti approach”: throwing everything at the wall to see what sticks. If you change the headline, the CTA button, and the background image all in one variation, and that variation wins, you have no idea which change caused the lift. This prevents you from gaining insights you can apply to other parts of your site.

Mistake 2: Ending Tests Too Early

Patience is a core discipline in CRO. It is tempting to stop a test the moment you see a green “95% significant” bar in your dashboard. However, checking results repeatedly and stopping at the first significant reading inflates your false-positive rate. Early results are noisy, and extreme early differences often regress to the mean. If you don’t let the test run for at least one or two full business cycles (usually 7–14 days), you might be looking at a temporary fluctuation rather than a lasting improvement.
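To see why early stopping is risky, the sketch below simulates A/A tests, where both versions are identical, so every “winner” is a false positive. It compares checking significance once at the end with checking after every day and stopping at the first significant result. This is a minimal Python (3.8+) sketch under stated assumptions: the traffic level, the 10% conversion rate, the 14-day horizon, and the function names are all hypothetical, and significance is judged with a standard two-sided, two-proportion z-test rather than whatever method a particular testing tool uses.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # degenerate data (no or all conversions): no evidence
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(n_experiments: int = 400, days: int = 14,
                      visitors_per_day: int = 100, rate: float = 0.10,
                      alpha: float = 0.05, seed: int = 42):
    """Return (fixed_horizon_rate, daily_peeking_rate) of false positives.

    Both variants share the same true conversion rate (an A/A test), so any
    significant result is a false positive by construction.
    """
    rng = random.Random(seed)
    fixed_hits = 0
    peeking_hits = 0
    for _ in range(n_experiments):
        conv_a = conv_b = n = 0
        ever_significant = False
        for _day in range(days):
            # Simulate one day of visitors for each variant.
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_day))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_day))
            n += visitors_per_day
            # A "peeker" checks the dashboard daily and would stop here.
            if p_value(conv_a, n, conv_b, n) < alpha:
                ever_significant = True
        if ever_significant:
            peeking_hits += 1
        # The disciplined tester looks only once, after the planned horizon.
        if p_value(conv_a, n, conv_b, n) < alpha:
            fixed_hits += 1
    return fixed_hits / n_experiments, peeking_hits / n_experiments


fixed_rate, peeking_rate = simulate_aa_tests()
print(f"Check once at the end: {fixed_rate:.1%} false positives")
print(f"Check every day:       {peeking_rate:.1%} false positives")
```

The fixed-horizon rate should land near the nominal 5%, while the daily-peeking rate comes out noticeably higher, even though neither version is actually better.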

Mistake 3: Ignoring Statistical Significance

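Statistical significance tells you how unlikely it would be to see a difference this large between two versions if they actually performed the same. A result that clears your chosen threshold (commonly 95% confidence, or p < 0.05) is unlikely to be pure chance, though not impossible. Ignoring significance leads to A/B testing errors where you implement a “winning” change that actually does nothing or even harms your conversion rate long-term.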
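As a rough illustration of what the significance check in a testing dashboard computes, here is a minimal Python (3.8+) sketch of a two-sided, two-proportion z-test, one common way to compare conversion rates. The function name and the example numbers are hypothetical, and commercial tools may use different methods (for example, Bayesian or sequential statistics).

```python
import math
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, visitors_a: int,
                           conv_b: int, visitors_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates.

    Uses the pooled z-test: under the null hypothesis both variants share one
    conversion rate, estimated from the combined data.
    """
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if se == 0:
        return 1.0  # degenerate data: nothing to distinguish
    z = (rate_b - rate_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical test: 2.0% vs 2.4% conversion on 10,000 visitors each.
p = two_proportion_p_value(200, 10_000, 240, 10_000)
print(f"p-value: {p:.3f}")  # above 0.05, so not significant at 95% confidence
```

A 20% relative lift sounds impressive, yet at this sample size it cannot be distinguished from noise at the 95% level. The same two rates measured on 100,000 visitors per variant would be highly significant.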

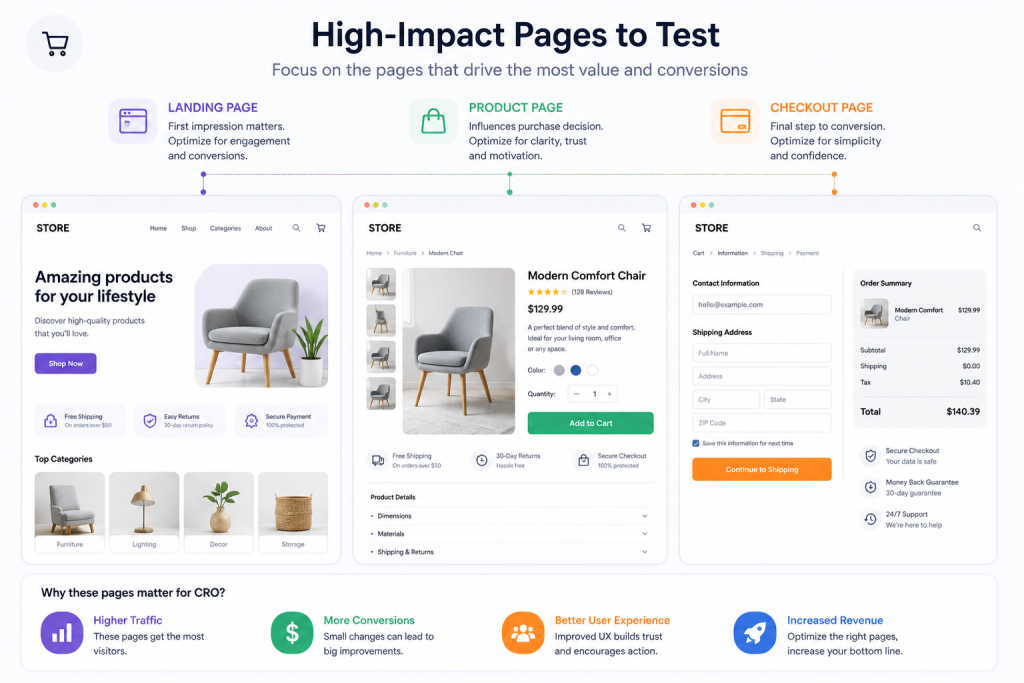

Mistake 4: Testing the Wrong Pages

Testing the “About Us” page when your “Checkout” page is leaking money is a classic strategic error. Focus on high-impact areas where the traffic volume is high enough to produce results quickly.

Mistake 5: Not Tracking the Right Metrics

Focusing on vanity metrics, like “clicks,” can be misleading. A change might increase clicks but decrease actual purchases. Tie your primary metric to your bottom line, such as Revenue Per Visitor (RPV) or Average Order Value (AOV).
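To make the comparison concrete, the sketch below computes click-through rate, conversion rate, AOV, and RPV side by side for two variants. The figures are made-up illustrations, not real results, and the `VariantStats` class is a hypothetical helper, not part of Shopify or any testing tool.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Hypothetical per-variant totals exported from a testing tool."""
    visitors: int
    cta_clicks: int
    orders: int
    revenue: float

    @property
    def click_rate(self) -> float:
        return self.cta_clicks / self.visitors

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    @property
    def aov(self) -> float:
        # Average Order Value: revenue per order (0 if there were no orders).
        return self.revenue / self.orders if self.orders else 0.0

    @property
    def rpv(self) -> float:
        # Revenue Per Visitor folds conversion rate and AOV into one number.
        return self.revenue / self.visitors


# Illustrative numbers only: B wins on clicks but loses on revenue.
a = VariantStats(visitors=10_000, cta_clicks=1_200, orders=300, revenue=18_000.0)
b = VariantStats(visitors=10_000, cta_clicks=1_500, orders=280, revenue=16_800.0)
for name, v in (("A", a), ("B", b)):
    print(f"{name}: CTR {v.click_rate:.1%}, CR {v.conversion_rate:.1%}, "
          f"AOV ${v.aov:.2f}, RPV ${v.rpv:.2f}")
```

Judged on clicks alone, B looks like a 25% improvement; judged on RPV, it costs about 12 cents per visitor.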

Why Your A/B Test Is Not Working

When implementing A/B testing for Shopify, many users get frustrated when their results are “inconclusive.” If you are wondering why your A/B test is not working, it usually boils down to three environmental factors: traffic, time, and the underlying user experience.

Your Sample Size Is Too Small

Mathematics doesn’t care about your marketing goals. If you don’t have enough users flowing through the funnel, the “noise” of individual behavior overrides the “signal” of the test. You need a large enough sample size to ensure that your results are statistically reliable.
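One way to see noise overpowering signal is the margin of error around a measured conversion rate. This minimal Python (3.8+) sketch uses the normal-approximation (Wald) 95% interval, which is only a rough approximation and is least reliable at small samples and very low rates; the 3% conversion rate and the function name are hypothetical.

```python
import math
from statistics import NormalDist


def margin_of_error(rate: float, visitors: int, confidence: float = 0.95) -> float:
    """Half-width of a normal-approximation confidence interval for a rate."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 at 95%
    return z * math.sqrt(rate * (1 - rate) / visitors)


for visitors in (100, 1_000, 10_000, 100_000):
    moe = margin_of_error(0.03, visitors)
    print(f"{visitors:>7,} visitors: 3.0% ± {moe:.2%}")
```

At 100 visitors the margin (about ±3.3 percentage points) is larger than the 3% rate itself, so almost any difference between variants could be noise. Because the margin shrinks with the square root of the sample size, 100 times more visitors makes it only 10 times narrower.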

Your Test Duration Is Too Short

User behavior changes depending on the day of the week. Someone shopping on a Monday morning at work has a different mindset than someone browsing on a Saturday night. If you only run a test for three days, you are only capturing a slice of your audience’s behavior.

Your Website Has UX Problems

If your site takes 10 seconds to load or the mobile menu is broken, an A/B test on a button color won’t save you. Fundamental UX problems act as a “ceiling” for your conversion rate. You must fix the foundational issues before fine-tuning with experiments.

How to Fix A/B Testing Mistakes (Practical Solutions)

To move from “guessing” to “growing,” you need a repeatable framework that minimizes human error. If your experiments have felt like a shot in the dark, these five practical solutions will help you get clearer results. For many brands, this is the stage where they look into Shopify CRO services to ensure their technical foundation is as solid as their strategy.

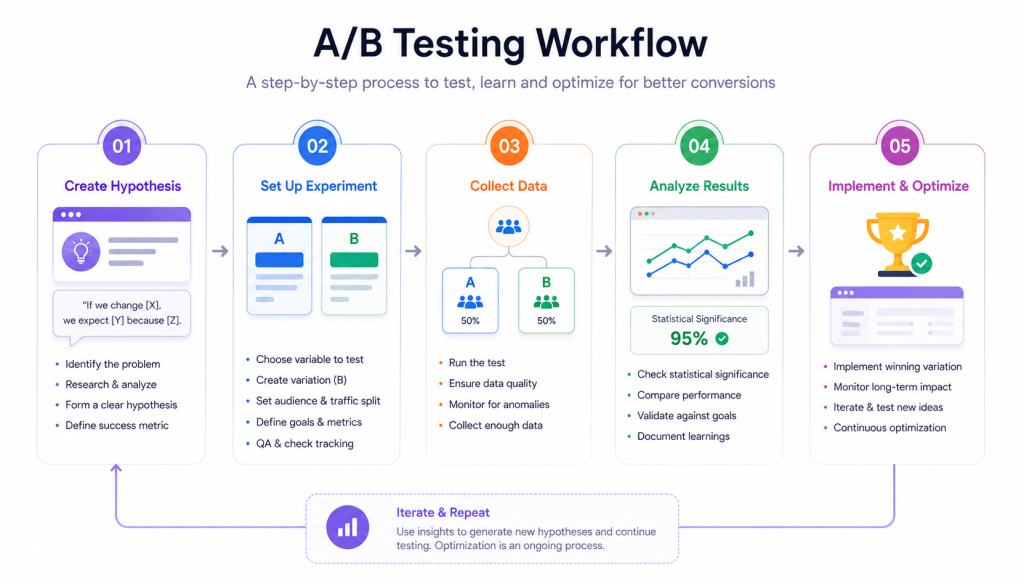

Solution 1: Start With a Clear Hypothesis

A weak test starts with “I want to see if this works.” A strong test starts with a structured observation.

- Instead of: “Change the CTA color to green.”

- Use: “Because heatmaps show users are scrolling past the CTA without noticing it, changing the button color to a high-contrast green will increase Click-Through Rate (CTR) by 15%.” This ensures that even if the test “fails,” you’ve learned something specific about your audience’s visual hierarchy.

Solution 2: Test One Variable at a Time

To fix A/B testing mistakes, you must embrace isolation. If you change the headline, the image, and the price at the same time, you create “confounded” data. By isolating changes, you can confidently attribute a lift in sales to a specific element, allowing you to double down on that strategy across your entire site.

Solution 3: Calculate Proper Sample Size

Before you push a test live, use a statistical power calculator to estimate how many visitors are required to detect the effect you care about. If your traffic is low, don’t test tiny tweaks (like button shades); test big, “disruptive” changes that are likely to cause a larger, more measurable impact.
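Power calculators generally implement some version of the standard sample-size formula for comparing two proportions. The minimal Python (3.8+) sketch below shows one common form, assuming a two-sided test at 95% confidence and 80% power; the function name and the example rates are hypothetical, and your testing tool’s calculator may use a slightly different formula.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH variant to detect `relative_lift` over
    `baseline_rate` with a two-sided two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    if not (0 < p1 < 1 and 0 < p2 < 1) or p1 == p2:
        raise ValueError("rates must be distinct and strictly between 0 and 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical store with a 2% baseline conversion rate.
small = sample_size_per_variant(0.02, 0.10)  # detect a 10% relative lift
large = sample_size_per_variant(0.02, 0.50)  # detect a 50% relative lift
print(f"10% lift: {small:,} visitors per variant")
print(f"50% lift: {large:,} visitors per variant")
```

Under these assumptions, detecting a 10% lift needs roughly 80,000 visitors per variant, while a 50% lift needs fewer than 4,000. That gap is why low-traffic stores get more out of bold changes than subtle ones.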

Solution 4: Run Tests Long Enough

Data needs time to breathe. A common mistake is stopping a test because version B is winning on Tuesday, only to find that version A wins over the weekend. A minimum duration of 1–2 weeks is a widely used rule of thumb, because it captures the different buyer personas who shop at different times of the week.
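Turning a required sample size into a run time is simple arithmetic. The hypothetical helper below divides the total visitors needed by the daily traffic entering the test, enforces a 14-day floor, and rounds up to whole weeks so every weekday appears equally often. The 14-day minimum mirrors the 1–2 week guideline in this section; the function name and traffic figures are assumptions for illustration.

```python
import math


def planned_duration_days(required_per_variant: int, variants: int,
                          daily_visitors: int, min_days: int = 14) -> int:
    """Days to run a test, rounded up to whole weeks.

    required_per_variant: visitors each variant needs (from a power calculation)
    variants: number of arms, including the control
    daily_visitors: visitors per day entering the experiment, split evenly
    """
    if daily_visitors <= 0:
        raise ValueError("daily_visitors must be positive")
    days_for_traffic = math.ceil(required_per_variant * variants / daily_visitors)
    days = max(days_for_traffic, min_days)
    return math.ceil(days / 7) * 7  # full weeks capture weekday/weekend swings


print(planned_duration_days(8_000, 2, 2_000))   # → 14 (traffic suffices in 8 days)
print(planned_duration_days(8_000, 2, 1_000))   # → 21 (needs 16 days)
print(planned_duration_days(80_000, 2, 1_000))  # → 161 (needs 160 days)
```

The last case shows the low-traffic wall: when a test would need more than five months, testing a bolder change is usually more practical than waiting.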

Solution 5: Focus on High-Impact Pages

Don’t waste traffic on your “Terms and Conditions” or “Contact Us” pages. If you want to see a real ROI, focus your efforts where the money is:

- Product Pages: Testing “Buy Now” vs. “Add to Cart.”

- Checkout Pages: Reducing form fields to lower friction.

- Landing Pages: Aligning ad copy with page headlines.

By applying these practical fixes, you ensure that every experiment you run provides a clear, actionable insight for your business.

A Simple A/B Testing Checklist for Better Results

To ensure you avoid A/B testing mistakes in the future, use this checklist before hitting “Publish” on any experiment. This is essential for effective landing page optimization.

- Is the hypothesis clear and tied to a specific user behavior?

- Is only one variable being changed (or are you okay with a compound result)?

- Is the tracking set up correctly in both the testing tool and Google Analytics?

- Have you calculated the required sample size and duration?

- Does the test account for at least two full business cycles (14 days)?

- Is the test running on all devices (or intentionally excluded)?

- Have you performed a QA check to ensure the page isn’t “flickering” or broken?

By consistently checking these boxes, you significantly reduce the chance of common A/B testing mistakes and increase the likelihood of finding a true winner.

Avoid A/B Testing Mistakes to Improve Results

A/B testing is a scientific investigation, not an automated box-ticking exercise. Most failed experiments on Shopify stores trace back to avoidable errors: tests that end too early, lack a clear hypothesis, or are interpreted without an understanding of the statistics behind them. Running complete experiments, following an established testing procedure, and understanding how results are determined prevents most of these mistakes.

Sustainable growth comes from building a team that learns from every test rather than one that only chases wins. Effective conversion rate optimization depends on ongoing analysis that turns every losing or inconclusive test into knowledge you can use in the next one. When you test your ideas with sound methods, uncertainty gives way to evidence, and your conversion rates can start to improve.

Which of your tests seem stuck right now, or which experiment are you planning to run first on your Shopify store?